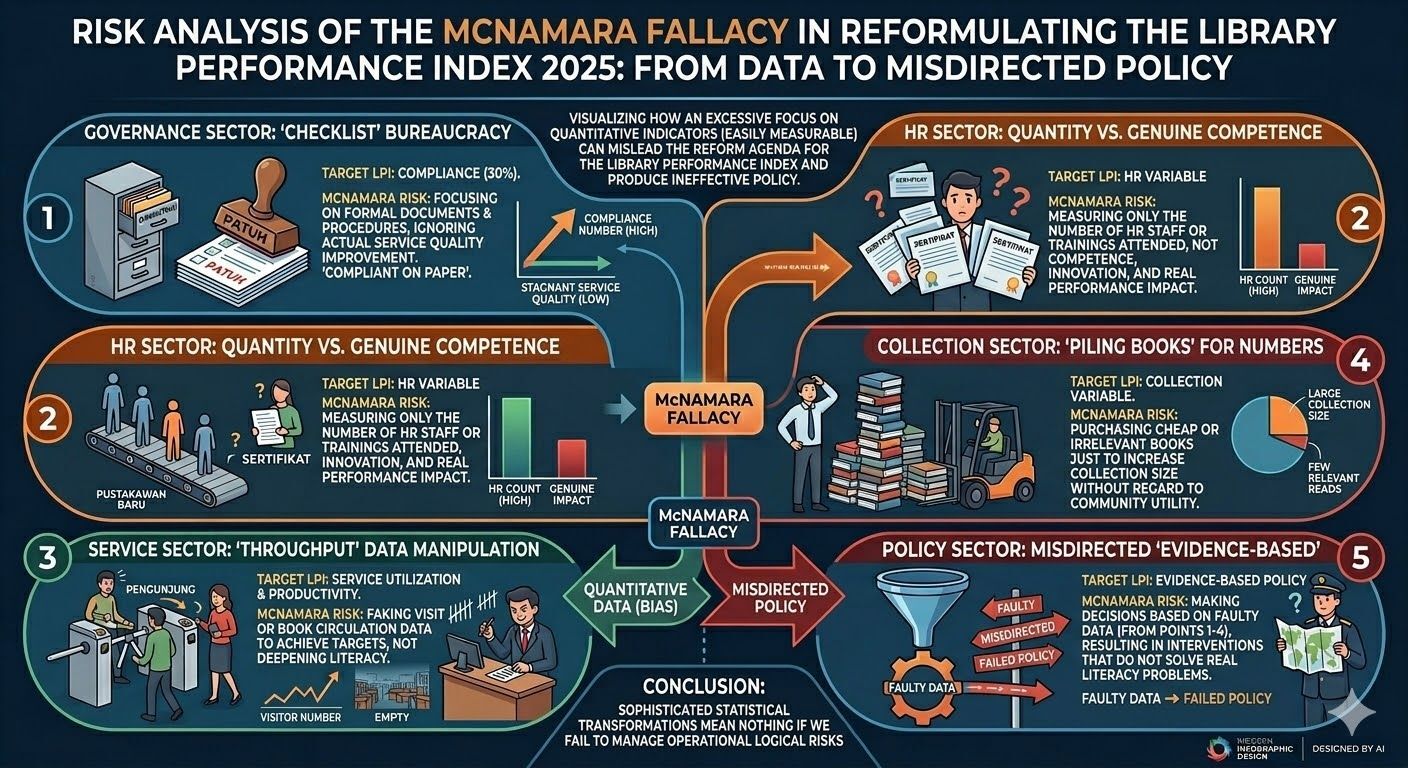

A hidden danger in library analytics — when numbers look right, but reality is wrong.

People often assume that working with national-scale library data is all about complexity.

They ask about machine learning models, pipelines, or transformations. But the real problem is much simpler, and far more dangerous.

In modern libraries, we are no longer facing a data scarcity problem. We have dashboards, reports, and indicators everywhere.

But here is the uncomfortable truth:

Counting books is easy. Measuring whether they are actually used, relevant, and impactful is much harder. When we focus only on numbers, libraries may optimize for quantity — not usefulness.

A high visitor count looks impressive. But does it reflect learning, literacy improvement, or meaningful interaction? Not necessarily.

Reports are easy to measure. Human experience is not. When systems reward documentation more than impact, libraries risk becoming efficient on paper but ineffective in reality.

When metrics drift away from reality, the decisions based on them head in the wrong direction.

Because in the end, data is not the goal.

Impact is.